University

Good news! Last year, I became an actual engineer and earned my Bachelor of Science. Woohoo. What do I do with it now? Well, the master’s, of course. I’m currently leaning more towards academia even though I initially wanted to head straight into industry, which was partially why I chose chemical engineering as my field. But I was lucky to have the best professor at my university supervise me and give me confidence. He is a physicist specialised in materials science, and that’s what I worked on for my thesis as well. It’s damn cool and all, but I won’t go into any details here. I just wanted to let you know where my life is at this point in time.

That was actually at the beginning of last year. Where did all of the other time go? Honestly, no idea. It flew past me like never before. But it was great. The years before that were really dull and uninteresting, mainly due to COVID-19. Then, even though everything went kind of back to normal, I was practically done with the university courses and only my bachelor project was left. So I was often at home or at work, doing said project. It was a great time, no doubt, but I was mostly doing things by myself. After I graduated, or to be more precise, while I was still writing my thesis, I started with the courses for the master’s degree. It would have been far too much to attend all lectures, so I skipped most of them but still somehow (in hindsight I have no idea how) took most exams of that semester. In the second semester (which just finished), I could focus on uni a lot more and I had tons of projects and lab courses to complete for it. It was a great time, possibly the greatest I have had at university so far. But the quick transition was extremely exhausting: from lazily chilling at home with almost nothing to do, over to writing a thesis (not to mention doing the experiments for it) and studying for exams at the same time, and eventually to working two days a week, keeping up with six difficult lectures, working on several lab tasks, giving a weekly physics tutorial for freshmen, and preparing and giving my first own lecture, all within one year.

I could have postponed some of those time-consuming things, and that would have made the year far less dense, which is good, but also less surprising for me. It somewhat proved my capabilities and disproved the idea that my mental limits are reached quickly. You need such a pat on the shoulder once in a while, telling you that you’re actually good at something. So here I am, renewed, with solidified self-confidence.

Reading

In the little free time I had, I read the book “This is How You Lose the Time War”[1] by A. El-Mohtar and M. Gladstone, and at just short of 200 pages, I finished it pretty quickly. It’s categorised as science fiction (no surprise here), but also as romance. The latter really turns me off in books, so I avoided this particular one for some time. Even though it was published in 2019, a local bookstore still had it on its shelf recently and I figured that I should give it a try nevertheless. The reviews were quite positive.

And hell, indeed, this blew my socks off and made my eyes wet simultaneously! I won’t review it here, but: You will love it even if you hate romance. You will still love it even if you hate science fiction. But what you should like, I guess, is language. I had no idea how incredibly strong a single sentence could be if it was crafted to perfection[2]. This book must be it, the one with the most perfectly crafted writing in it. You have to read it. It’s not that you learn a lot of lessons, question life or whatever, no, it’s simply perfectly written. You have to read it for the sake of experiencing the power of utter love through this incredible prose.

To quote emmareadstoomuch[3]:

I tried to trick myself into stating all the ways in which it is amazing, but as always I got overwhelmed and ran out of words to describe it. – Emma

So I’d better not try the same here. But one thing is certain for me. If this book does not become a classic that is still read 100 years from now, I’ll be turning in my grave.

Diary

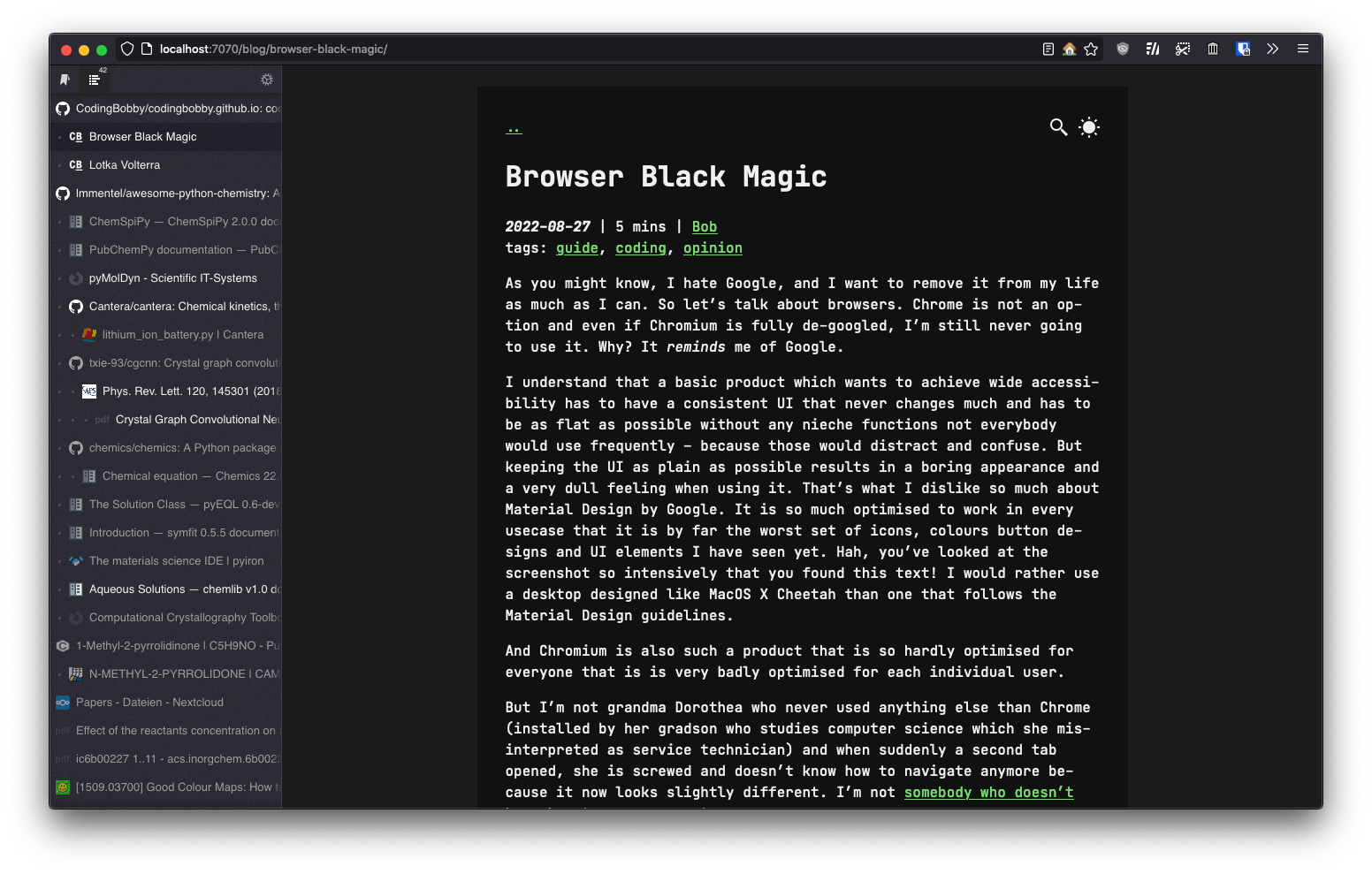

I’ve been using Obsidian for quite a while now to write notes, project plans and drafts for documents, and to collect sources and other useful information. It’s really good for that purpose, and I like how it’s purely based on Markdown files that can link to each other and to other files or resources. Super useful and far more organised than the typical note-taking app that isn’t compatible with things outside what it’s designed for[4].

Last September, I figured that I kind of wanted to keep a diary. Not the kid-in-school, I-like-spaghetti, here-are-some-flowers-for-mom type of diary, and also not the restricted type that you keep in a worn booklet someone steals from you one day. Rather something more interactive, interconnected and explorable. I figured Obsidian would be a cool thing to try for this. The idea is to write daily entries as well as meta entries, which simply collect information that is not bound to a specific time, and to interconnect them the way it happens in my mind during the day. Say, for example, I take a trip to a city I haven’t been to. I might write about the trip, things I’ve seen or done, which museum I visited and how I liked it, you name it. Then I add another file specifically for that city, and in it I put some interesting facts that I’ve learned about it: for example, how old the city is, which famous person lived there, a memorable building, etc. That famous person or building gets another file, and so on. While writing about one such thing, I can link to its respective file, where more information can be found. Pretty much like a wiki. But it wouldn’t be like any online wiki, because I write it myself from my perspective: how I like things, what my opinion is about them, which people I met, how and when, and what I learned from them.

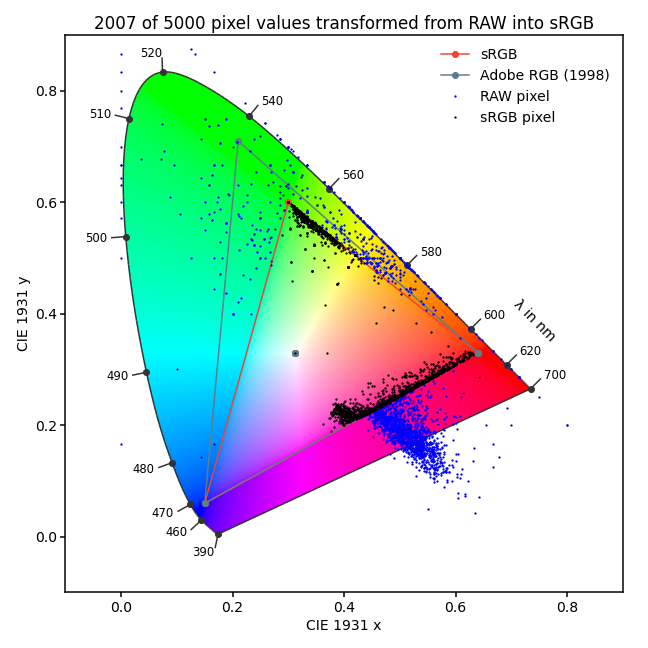

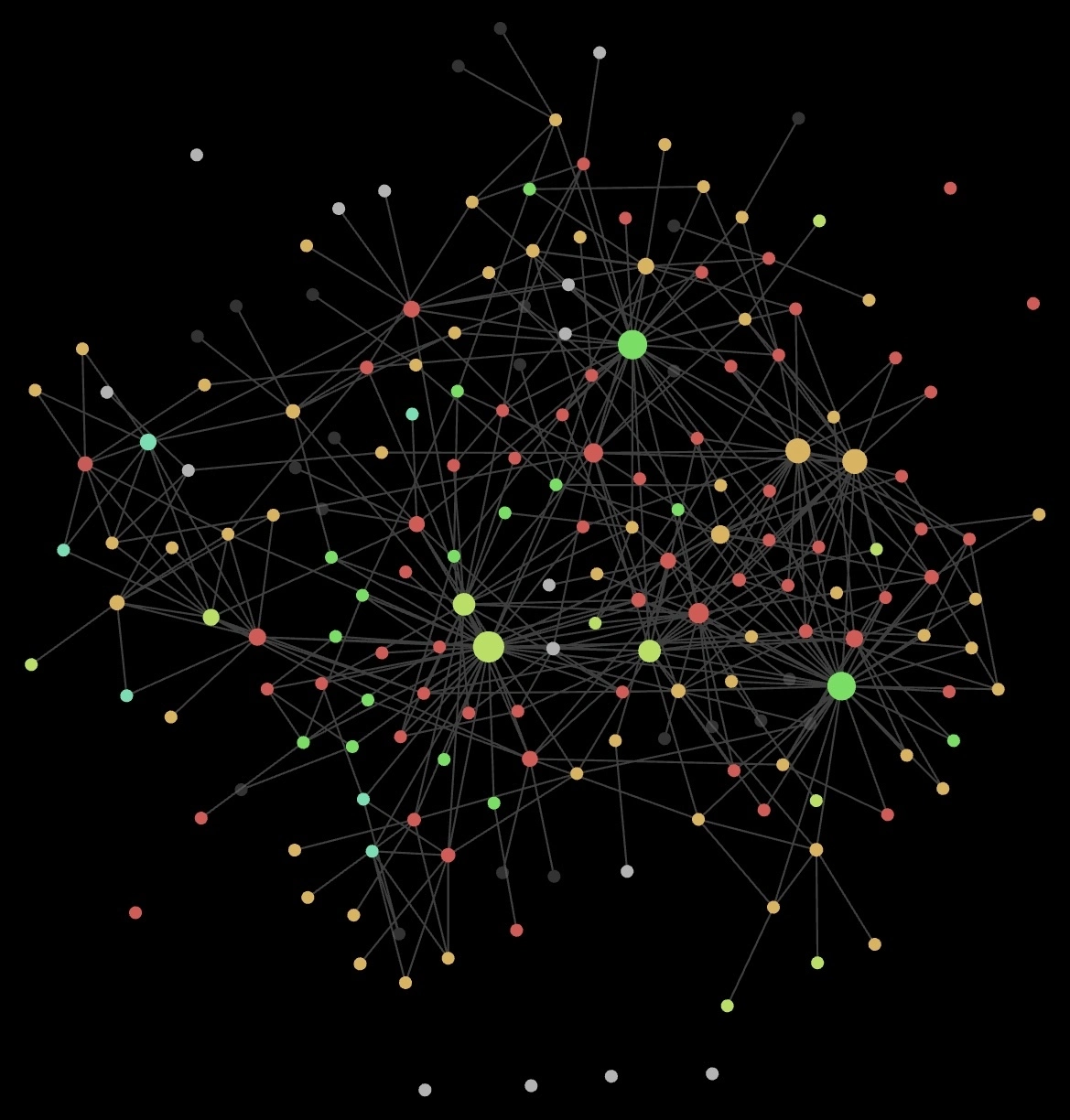

So I’ve done just that for a while now. I missed some days or even weeks and didn’t write anything, but why care? As long as I enjoy doing it, I do it, and when I don’t, I don’t. What a stupid sentence. Well, whatever. The coolest feature of Obsidian[5] is the built-in graph view, which displays all your files and draws the references you made within them as connections. So here you can see what my life looked like during the past couple of months:

In that graph, the colours represent the folder each respective file is in.

I’ll explain it a bit:

Red are the diary entries – as you can see, there are fewer points than days since September, as I’ve skipped quite a few.

Still, the files are named like 2024-03-14.md, just as you would start a new page and place a heading in a “standard” diary notebook.

In that entry, I can link to other files.

Say I want to mention London as a city I visited; then I would simply type [[London]] and it would automatically link to the file London.md which can be placed elsewhere and also contain similar links.

The size of a point represents how many such connections it has to other files.
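If you want to play with the same idea outside of Obsidian, here is a minimal Python sketch of what such a graph boils down to. It is not how Obsidian itself works internally; I am simply assuming that a connection is any `[[Name]]` link between two files and that a point's size follows the number of links going in or out. The function names, and the example notes "Alice" and "British Museum", are made up for illustration.

```python
import re
from collections import Counter
from pathlib import Path

# Matches [[Name]], [[Name|alias]] and [[Name#heading]]; captures only "Name".
WIKILINK = re.compile(r"\[\[([^\]|#]+)(?:[#|][^\]]*)?\]\]")


def extract_links(text):
    """Return the note names referenced by wiki-style links in `text`."""
    return [m.group(1).strip() for m in WIKILINK.finditer(text)]


def connection_counts(notes):
    """Count, per note, how many links touch it (outgoing plus incoming).

    `notes` maps a note name (file name without .md) to its text.
    Repeated links from one note to the same target count once.
    """
    counts = Counter()
    for name, text in notes.items():
        for target in set(extract_links(text)):
            if target != name:  # ignore self-references
                counts[name] += 1
                counts[target] += 1
    return counts


def load_vault(folder):
    """Read every Markdown file below `folder` into a {name: text} dict."""
    return {p.stem: p.read_text(encoding="utf-8")
            for p in Path(folder).rglob("*.md")}


# Hypothetical mini-diary, standing in for load_vault("path/to/vault").
notes = {
    "2024-03-14": "Took the train to [[London]], met [[Alice]] at the [[British Museum]].",
    "London": "Capital of England. Home of the [[British Museum]].",
    "Alice": "Lives in [[London]].",
    "British Museum": "",
}
counts = connection_counts(notes)
for name, n in counts.most_common():
    print(f"{name}: {n}")
```

With real files, `load_vault` would replace the hand-written dictionary. The counts then give a rough idea of which points would end up largest in the graph.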

Cities are shown in lime. The largest point is the city I live in, and right next to it is where my family lives. During the winter and Christmas holidays, I was there for a few weeks. Locations are green. Those can be many things for me, like districts or streets in a city, buildings, parks, or other places you can visit. In the lower right corner is my university, for example, and the other large point at the top is where I work.

Now, the most interesting part (for me): Orange points represent people. They are mostly people I’ve actually met on a given day, but I’ve also added a few with whom I spent some time in another way – through a book of theirs that I’ve read, for example. The two large ones on the right are close friends who also attend my university and coincidentally work at the same place. So we naturally spend a lot of time together and I mention them pretty often in my daily entries. The slightly separated cluster you can see on the far left consists of people from the local photography community. We have met a few times on the street or at gallery exhibitions (such events are blue, by the way). I wish I could see them more often, though, as those activities are not only fun and relaxing but evidently, as you can see by the visible separation from the rest, let me escape the everyday chaos.

I think this graph view is a really nice way to visualise what I do, where I do it and with whom. This is probably just the start – imagine looking at 10 years’ worth of interconnections!

So there we have it. I hope you are having one as well, a nice time to live in and appreciate, and if not, maybe read a little book to cheer you up. The world is shit and unfair, but remember, we can shape it.

[1] Goodreads page of “This is How You Lose the Time War”.

[2] One of my favourite sections is probably this: “Flowers grow far away on a planet they’ll call Cephalus, and these flowers bloom once a century, when the living star and its black-hole enter conjunction. I want to fix you a bouquet of them, gathered across eight hundred thousand years, so you can draw our whole engagement in a single breath, all the ages we’ve shaped together.” Even as I’m copying this from the book right now, my tears are knocking on the door again.

[3] Emma’s review of the book.

[4] It’s weird and probably personal to me. Whenever I find a new and innovative app for taking notes, I initially like it, but eventually forget about it again. In order to actually use it over a long time, the solution needs to be so omnipresent, straightforward and simple that it becomes natural to remember where you noted something. I sometimes have a slight feeling that I wanted to remind myself of something, wanted to check something again later or use a specific value for something. My current solution is Apple’s Notes app, where I don’t organise anything and have literally just two files. One is a grocery shopping list where I keep all entries and just tick an item on when I’ve bought it or off when I’ve used it up and need it again. The second one is for everything else. It’s a crazy mess, but the only way that works for me. Everything else I’ve tried does not, and that for the stupid reason that I simply forget that the note file even exists. I would need an additional catalogue to find where I noted what, but when noting where I noted something, I could just as well note it directly there – and that’s what I do in the one messy file. I hope what I just wrote makes sense. Nevertheless, Obsidian somewhat sneaked into this and proved to work for me to a degree. Definitely not for the purpose I use Apple Notes for, but as a more meta note- and idea-keeping system. It didn’t replace anything for me, but rather provides an additional way of dumping my brain.

[5] Obsidian has many other cool features, some achievable through community plugins and some inherited from the plain Markdown format. Embedding photos without typing long links manually, embedding PDFs (it’s possible to scroll through them within the rendered Markdown), rendering LaTeX formulas, displaying GPX tracks on a map, using hashtags inside the text, and the search function all work super well. The list of all backlinks that point to the current file, and the file tree that is flattened in the background so that files can be linked without any paths, make the app really solid.

A very good historical summary of what it took to discover chaos, study chaos and explain chaos.

In Chaos, James Gleick not only describes what happened back then, but lets you discover the simply mind-boggling science yourself.

A very good historical summary of what it took to discover chaos, study chaos and explain chaos.

In Chaos, James Gleick not only describes what happened back then, but lets you discover the simply mind-boggling science yourself. Steven Strogatz is an exceptionally good lecturer at Cornell University as well as a very good writer that explains strange and complex concepts to you like not many other people can do.

This book goes a step beyond “simple” chaos and shows you how nature manages to synchronise its elemental randomness back into order.

Steven Strogatz is an exceptionally good lecturer at Cornell University as well as a very good writer that explains strange and complex concepts to you like not many other people can do.

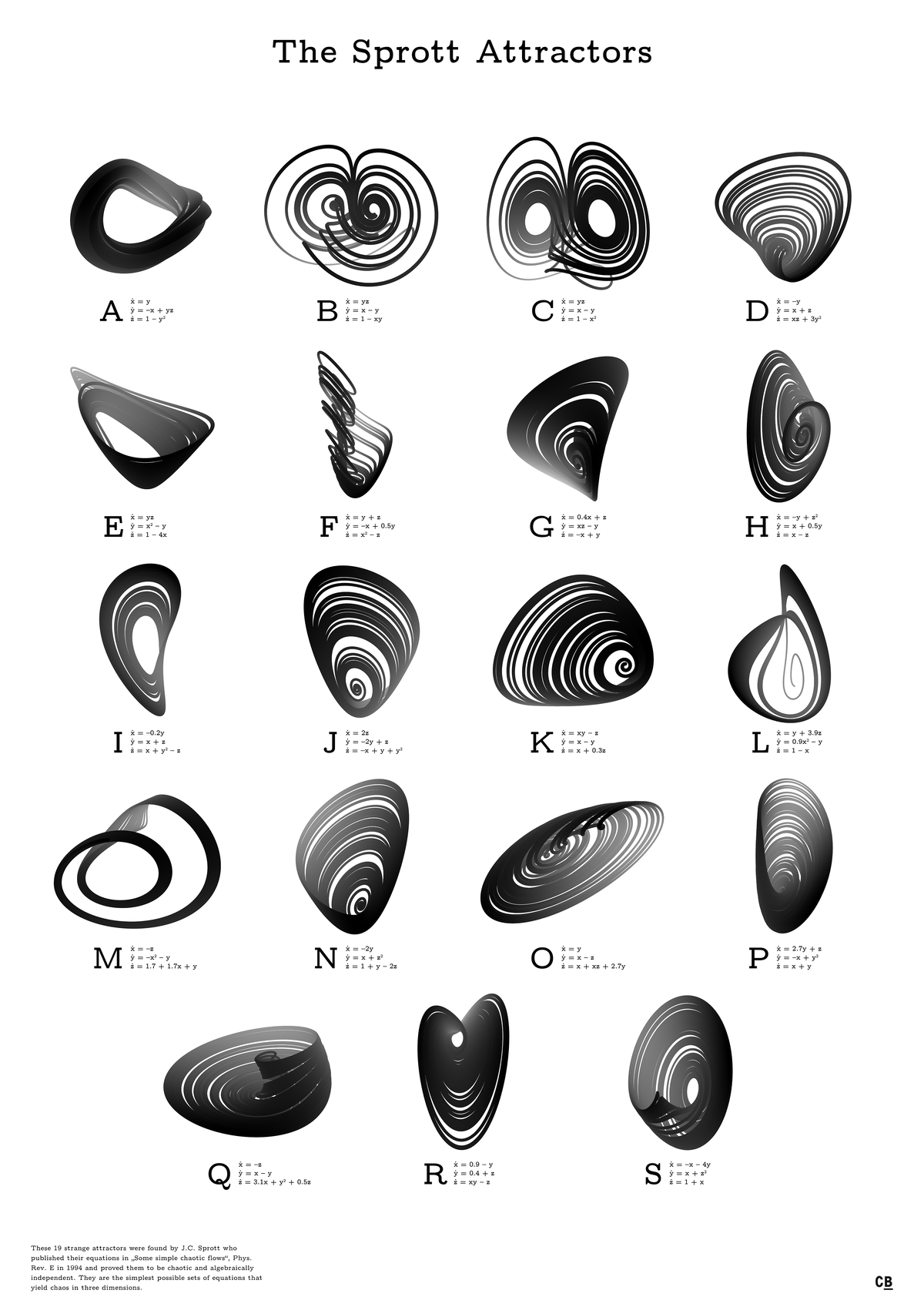

This book goes a step beyond “simple” chaos and shows you how nature manages to synchronise its elemental randomness back into order. Computers, Pattern, Chaos and Beauty is my favourite of these books.

It inspired me to write my own little programs to compute fractal images and attractors renders, which you can also find

Computers, Pattern, Chaos and Beauty is my favourite of these books.

It inspired me to write my own little programs to compute fractal images and attractors renders, which you can also find  Detailed and highly in-depth descriptions of fractal rendering methods and algorithms.

Eight specialized researchers bring together their knowledge about all important aspects of the simulation and modelling of fractal growth, generation of random as well as deterministic fractals and patterns and show how to create digital representations of nature based on the science of fractals.

Many pseudocodes are included.

Detailed and highly in-depth descriptions of fractal rendering methods and algorithms.

Eight specialized researchers bring together their knowledge about all important aspects of the simulation and modelling of fractal growth, generation of random as well as deterministic fractals and patterns and show how to create digital representations of nature based on the science of fractals.

Many pseudocodes are included. This could also be included in the

This could also be included in the  Relatively little focus on chaos theory itself, but a great support for understanding how a system becomes dynamic and what types of systems there are scattered across different fields of mathematics.

This basic knowledge helps to understand more advanced theories discussed in more specialised

books.

Relatively little focus on chaos theory itself, but a great support for understanding how a system becomes dynamic and what types of systems there are scattered across different fields of mathematics.

This basic knowledge helps to understand more advanced theories discussed in more specialised

books. In my opinion, the standard work in this field.

With this, you get over 800 pages of condensed knowledge about basically every aspect of chaos theory.

The beauty you’ll find in this book is not necessarily in visually appealing images, but in the mathematics of this enthralling science.

In my opinion, the standard work in this field.

With this, you get over 800 pages of condensed knowledge about basically every aspect of chaos theory.

The beauty you’ll find in this book is not necessarily in visually appealing images, but in the mathematics of this enthralling science. Possibly the greatest and single most important publication about fractal images and what this field in mathematics is about.

Benoît Mandelbrot himself shows every aspect of fractals he knew at the time and draws a connection between them.

Depending on the edition, this book might cost you a fortune.

Possibly the greatest and single most important publication about fractal images and what this field in mathematics is about.

Benoît Mandelbrot himself shows every aspect of fractals he knew at the time and draws a connection between them.

Depending on the edition, this book might cost you a fortune. Actually beautiful and high-quality pictures of fractals and chaotic objects in a large-format book with great explanations of what is depicted and some theory behind it.

Actually beautiful and high-quality pictures of fractals and chaotic objects in a large-format book with great explanations of what is depicted and some theory behind it. A great timeline of important discoveries in mathematics.

From 150 Million B.C. to 2007, Cliff Pickover shows 250 milestones with a great image and provides a short summary of what it is about and why it was important.

The hardback edition is particularly nice to flip through.

A great timeline of important discoveries in mathematics.

From 150 Million B.C. to 2007, Cliff Pickover shows 250 milestones with a great image and provides a short summary of what it is about and why it was important.

The hardback edition is particularly nice to flip through.